Classification

Articles about training small language models for text classification tasks.

Knowunity — 50% LLM Cost Reduction

Replaced frontier model API calls with distilled SLMs, cutting inference costs by 50% without sacrificing quality.

Octodet — Customer Study

How Octodet uses distil labs to power their AI workflows.

We Benchmarked 12 Small Language Models Across 8 Tasks to Find the Best Base Model for Fine-Tuning

Qwen3-4B ranks #1 overall. A fine-tuned 4B model matches or exceeds a 120B+ teacher on 7 of 8 benchmarks, and a well-tuned 1B model outperforms a prompted 8B model.

Building a Local Agent for Email Classification Using distil labs & n8n

A 0.6B email classifier that auto-labels Gmail locally, reaching 93% accuracy from 154 seed examples. It runs on localhost via Ollama and n8n.

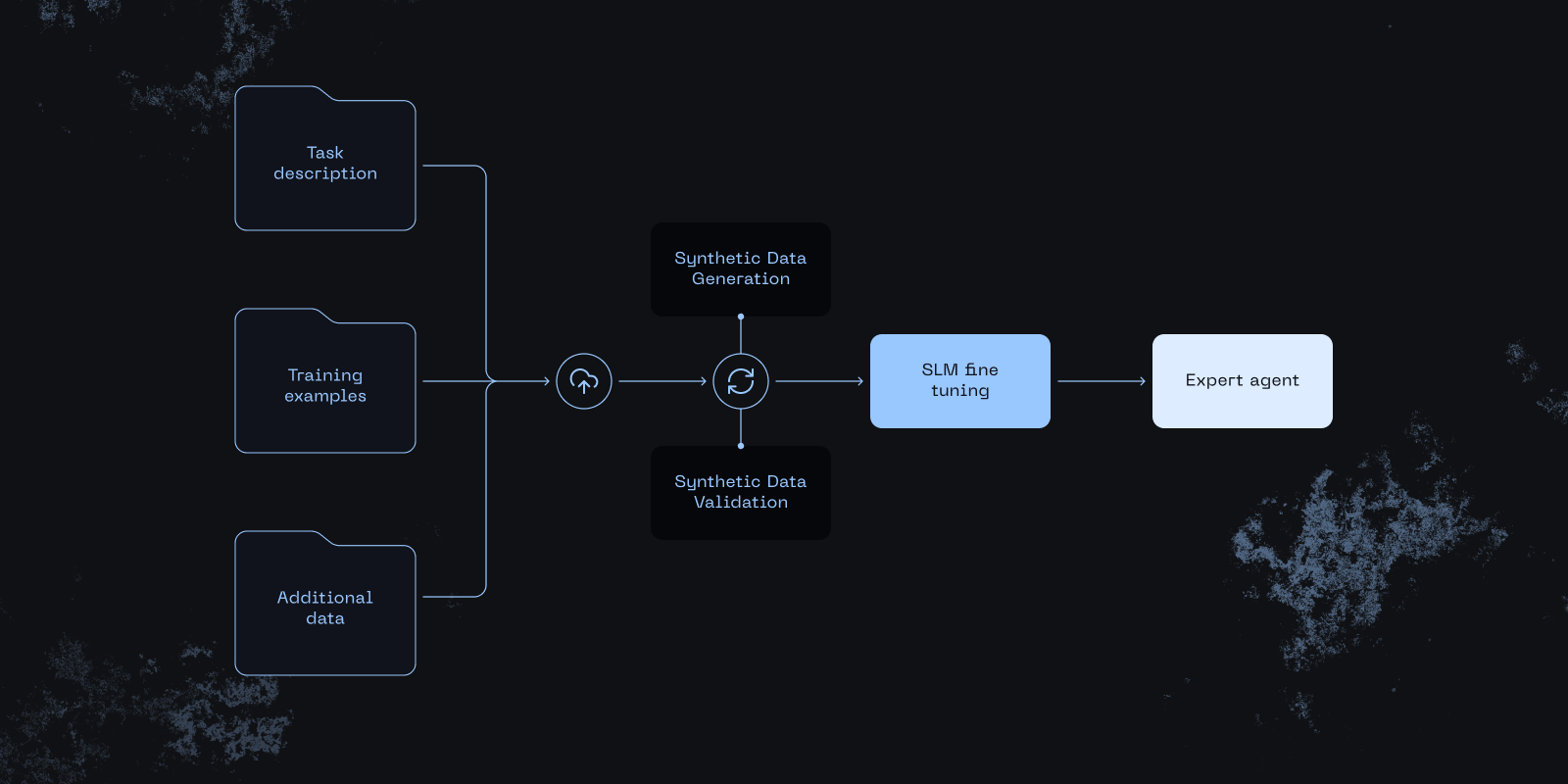

Vibe-Tuning: The Art of Fine-Tuning Small Language Models with a Prompt

From prompt to production-ready model — no datasets, no ML expertise, no GPUs. Automates data generation, distillation, and evaluation.

Benchmarking the Platform

Distilled students match or exceed the teacher LLM on 8 of 10 datasets across classification, NER, open-book QA, tool calling, and closed-book QA.

Small Expert Agents From 10 Examples

How the distil labs platform turns a prompt and a few dozen examples into a small, accurate expert agent — 50-400x smaller than LLMs.