Blog & Demos

Tutorials, case studies, benchmarks, and open-source demos: everything you need to build with small language models.

GitAra: How We Trained a 3B Function-Calling Git Agent for Local Use

A 3B function-calling model that turns plain English into git commands. It matches the 120B teacher's 92% accuracy while being 25x smaller, and it runs locally via Ollama.

Vibe-Tuning: The Art of Fine-Tuning Small Language Models with a Prompt

Go from a prompt to a production-ready model with no datasets, no ML expertise, and no GPUs. The workflow automates data generation, distillation, and evaluation.

Benchmarking the Platform

Distilled students match or exceed the teacher LLM on 8 of 10 datasets across classification, NER, open-book QA, tool calling, and closed-book QA.

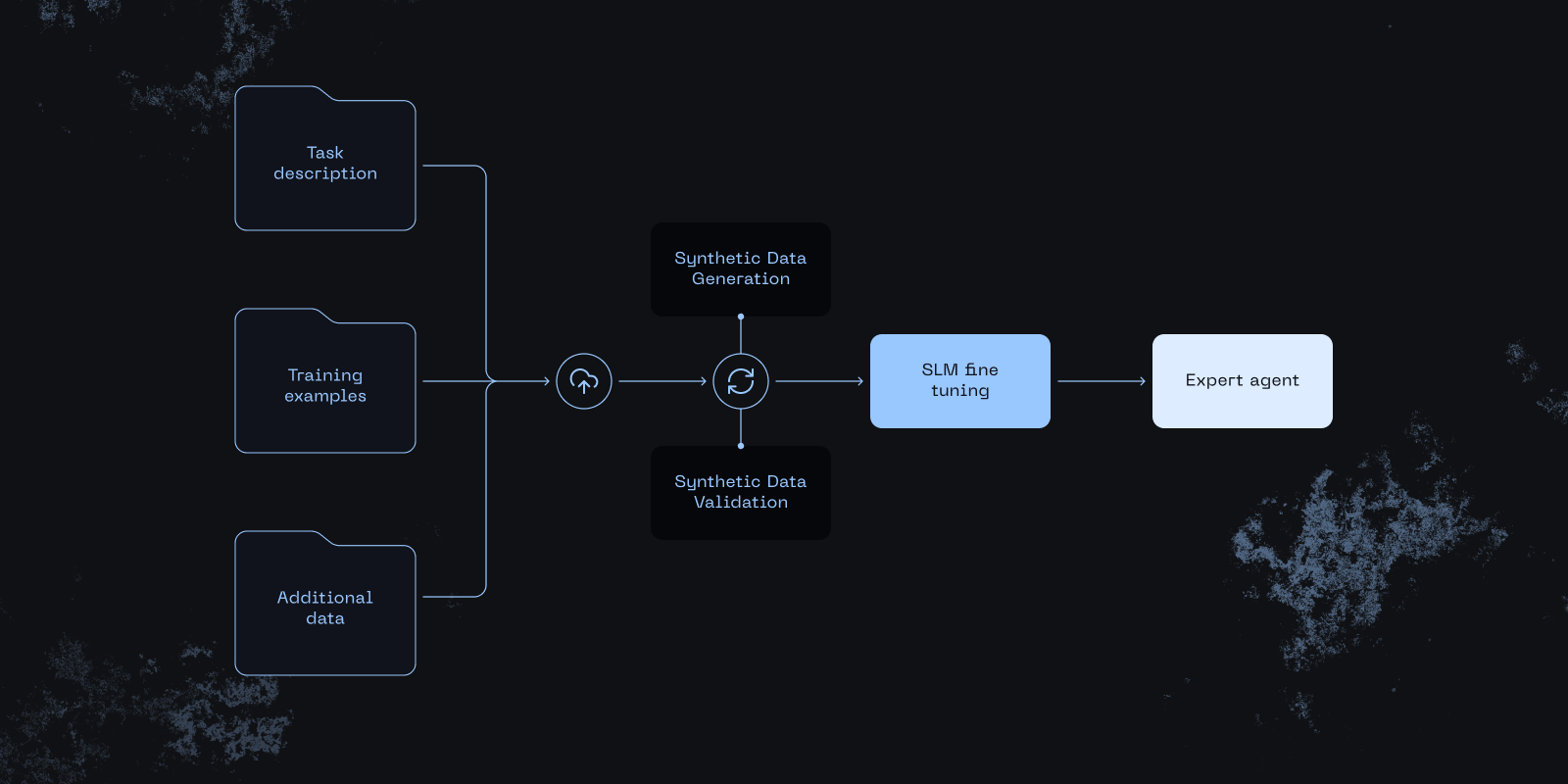

Small Expert Agents From 10 Examples

How the distil labs platform turns a prompt and a few dozen examples into a small, accurate expert agent that is 50-400x smaller than LLMs.

Small Models, Big Wins: Using Custom SLMs in Agentic AI

Agentic systems don't need an LLM everywhere. This post covers when to use SLMs versus LLMs, common use cases, and a decision framework for choosing between them.